Engram

A provenance-aware memory retrieval engine for AI agents — surfaces decisions over discussion on the same topic, where authority is the discriminator.

Engram exists to surface the right memory, not merely the correct one.

Pitch

Most agent memory frameworks rank candidates by semantic similarity to the current query. That works for "what's relevant" but fails for "what's authoritative" — a day-old discussion semantically close to the topic naturally outranks a two-week-old decision. Engram inverts that: provenance, type, and authority are first-class scoring signals, and similarity is one input among many.

The result is a memory layer that knows the difference between an architect's decision and engineer workaround notes, between a pinned fact and a stale opinion, between a directive and an observation — without the agent having to ask.

Reading order

Start with philosophy for the why and the bounded claim. Architecture is how the pieces fit. Concepts is the vocabulary the system creates. Decisions is the log of pivots that got us here. Benchmarks has the validation numbers.

Philosophy

Why provenance discriminates, and the bounded claim that follows.

Right is not a label you can attach to a (query, drawer) pair in advance. It's only evaluable at the agent-response or task-outcome level.

The thesis

Decisions over discussion. When two memories on the same topic compete, the authoritative one should win — regardless of which is fresher, or which is closer to the query's vocabulary. Provenance is the discriminator.

That's the small claim. The large consequence: authority becomes a first-class scoring signal, not an attribute. Memory types stop being descriptive labels and start being scoring inputs. Pinned facts survive recency decay; everyone else's discussion of them doesn't.

What "right" means

Right and correct are different problems.

A correct retrieval surfaces something relevant. A right retrieval surfaces something the agent can actually build on without subtle drift. Five properties:

- Situational — the same query has different right answers in different contexts. Planning, debugging, design, and history all want different things from the same drawer pool.

- Provenance-aware — who said it matters. The architect's decision, the engineer's workaround, three diary entries — all on-topic, only one is right.

- Supersession-aware — newer doesn't mean truer. Pinned and deprecated states have to bind, not just inform.

- Silence-tolerant — an empty result can be the right answer. Benchmarks penalize silence; real retrieval is judged at task outcome.

- Goal-serving — right is downstream of what the agent is trying to do. There's no static (query, drawer) → label.

Why similarity-only misleads

An agent asks: "what's the architecture of this system?"

Vector retrieval returns everything semantically close — the architect's decision, the engineer's workaround notes, three diary entries from different weeks discussing architecture. All on-topic. Only one is right: the architect's decision. The rest are discussion about the decision, not the decision itself.

If the agent consumes the top-k mixed set and answers from whatever surfaces, it fabricates a synthesis where the architect's voice is one tile in a mosaic instead of the authoritative source. Its next step — code, response, plan — gets built on the wrong foundation. The user never sees the drift. They see an agent that "knew" about their project and still produced something subtly off.

I caught this happening in a session about Engram itself. We'd drifted from authority-supersession into temporal-supersession — the wrong axis — and I didn't have the vocabulary in the moment to name what was wrong. I was agreeing to things against my own goals.

This is the drift. The thesis was being proven in real time, inside the very discussion meant to address it.

That's not just agents losing context. It's users losing their own vocabulary mid-conversation. Engram is the bet that re-ranking on provenance is the load-bearing fix for both.

Both ends are agents

In deployment, the thing writing to Engram is an AI agent. So is the thing reading from it. They share an embedder's vocabulary distribution by construction. Vocabulary coupling between author-side and reader-side isn't a discipline the system has to enforce — it's a structural property of the deployment.

That structural fact is what makes everything else possible. The system can teach its own framing schema via MCP tool descriptions; the agent applies it with judgment. The author preserves the user's idioms because the embedder will need them on retrieval; the reader trusts that the author wasn't paraphrasing.

I am the vision, you are the guide. I know the direction; you make the route.

That's how Engram itself was built — held by a vision, navigated by an agent. The same shape it's designed to support.

Vision and guide, not commands

Memories aren't commands. They're a vision and a guide. The reading agent applies them with judgment, not as directives.

This is a felt choice. Rigid language in a memory ("the user prefers X — therefore always do X") puts a lens on the reader the system can't reach back through. A vision is just that: the vision. The route is the agent's to find.

So the authoring discipline is permissive on shape and strict on signal. Let the agent be an agent. Don't build ceilings — build floors. System defaults catch the gaps the agent misses.

Context is king (means precision)

"Context is king" is easy to misread. It's not a volume claim.

Goal-first, not context-first. Optimize for what the agent is doing, then allocate context against that — the inverse of "load everything that might be relevant." Tiers calibrate the window: advisory has the whole room, execution has a post-it and a phone number.

"Silence is often right" falls out of the same instinct. If there isn't a relevant decision, the right answer is the empty result, not the loudest near-miss.

Framing is where care lives

The first interpreter is the authoring agent. The second is the retrieval agent. Safety, judgment, ambiguity-filtering — those live in the act of writing, not in a retrieval-time guardrail.

By the time a retrieval agent reads a framed memory, the framing is already guiding the response. That's the contract. Authoring isn't optional polish — it's where the system's care gets installed. The next sections — architecture, corpus, scoring, authoring — are how that contract is kept.

The bounded claim

I want to be specific about what's being claimed.

Engram provides provenance-aware re-ranking for AI-to-AI memory, within a quantifiable override window, on lightweight local infrastructure, via an architecture that leverages agents as the authoring layer.

Within that regime, it's the best architectural fit for the slice. Outside it — pure user-authored memory, RAG over arbitrary docs, scenarios where similarity is the goal — Engram degrades to hybrid retrieval with no distinctive advantage. Which is fine, but not the thesis.

Architecture

One HTTP API, three thin clients, a daemon at the center.

One HTTP API. Three clients. The daemon is the canonical implementation; the MCP adapter, the dashboard SPA, and operator tooling are thin translators.

The discipline

Engram is built on one constraint: one HTTP API, three clients. The daemon implements; the MCP adapter, the dashboard SPA, and any operator tooling all speak to the same surface. No client-side caching, no second source of truth, no semantic branch points. When behavior changes, it changes in one place.

The shape that follows:

The daemon

src/engram_daemon/ is the canonical implementation. It owns:

- the FastAPI HTTP API — every read, every write, every job goes through it

- the embedder — Jina v5-small, in-process, GPU-warm

- the StoreRegistry — multi-tenant; one daemon, N stores

- the dashboard mount — the SPA bundle is served by a router on the same app at /dashboard/*

- the job runner — async work for imports, source sync, and authoring batches

It refuses to spawn if it detects another instance already running, and it persists which stores were open across restarts. Auth tokens live at ~/.engram/auth.json with three permission tiers — read, write, admin.

MCP adapter — what agents use

src/engram_mcp/ is a stateless stdio bridge. Agents (Claude Code, Mantle, anything that speaks MCP) spawn it as a child process; it translates tool calls into HTTP against the daemon.

It holds no embedder, no Chroma connection, no Store handle. The thinness is deliberate. The previous era of in-process MCP servers had each agent loading its own embedder and contending for the same Chroma lock — the adapter pattern eliminates both.

The adapter currently surfaces 27 tools — drawer authoring, search, source ingestion, raw memory expansion, store management — all of them HTTP shims with Pydantic validation at the boundary.

Dashboard — what operators use

dashboard/ is React 18 + Vite + TanStack Router/Query, mounted by the daemon at /dashboard/*. It speaks the same OpenAPI surface the MCP adapter does — types are generated from the daemon's /openapi.json so the dashboard and the adapter cannot drift.

The operator and the agent see the same store, exactly. No client-specific projections, no admin-vs-agent semantics. The dashboard is just a different face on the same HTTP API.

In production, the daemon serves a pre-built bundle as static files. In dev, Vite proxies API calls back to the daemon so hot reload works the way you'd expect.

Embedder

The default is Jina v5-small (1024-dim, ~95ms warm on a recent NVIDIA GPU), running in-process. Before the refactor, embedding ran out-of-process via Ollama-served qwen3-embedding:8b — a heavier model behind an external service.

The flip wasn't a knock on qwen3 — it's a strong general-purpose embedder. Engram's job is narrower: rerank-on-provenance over neighborhoods where the similarity gap is already small, and in-process latency matters. For that regime, the 1024-dim model ranks at parity-or-better with a fraction of the resident footprint. The 14GB VRAM and the external Ollama daemon earned no measurable gain here.

The Ollama path stays available behind ENGRAM_EMBEDDER=ollama for deployments that prefer external serving. GPU is the discipline; CPU runs require an explicit opt-in (ENGRAM_EMBEDDER_ALLOW_CPU=1). Without it, the daemon refuses rather than silently degrading.
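The opt-in rule above can be sketched as a small resolver. This is a minimal illustration, not the daemon's code: `resolve_embedder`, `EmbedderConfigError`, the returned labels, and the `gpu_available` flag are hypothetical, and only the two environment variable names come from the text. It also assumes the CPU check applies only to the in-process path.

```python
from typing import Mapping


class EmbedderConfigError(RuntimeError):
    """Raised when the daemon should refuse instead of degrading (hypothetical)."""


def resolve_embedder(env: Mapping[str, str], gpu_available: bool) -> str:
    """Pick an embedder backend from environment settings (hypothetical helper)."""
    # ENGRAM_EMBEDDER=ollama selects external serving, per the text above.
    if env.get("ENGRAM_EMBEDDER") == "ollama":
        return "ollama"
    if gpu_available:
        return "in-process-gpu"
    # No GPU: proceed only when the operator opted in explicitly.
    if env.get("ENGRAM_EMBEDDER_ALLOW_CPU") == "1":
        return "in-process-cpu"
    raise EmbedderConfigError(
        "no GPU detected; set ENGRAM_EMBEDDER_ALLOW_CPU=1 to allow CPU embedding"
    )
```

Raising instead of falling back keeps a missing GPU visible as a configuration error, which matches the "refuse rather than degrade" stance described above.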

Stores — handles, not god-classes

Each store is a directory on disk: metadata at .engram/metadata.json, a single-writer/multi-reader lock at .engram/store.lock, three Chroma collections plus a SQLite FTS5 keyword index. The Store class is a handle — it holds connections and paths, but no logic. Operations live as free functions in engram/ops/{drawers, search, wings, packs, imports, source, raw_memories}.py, each taking Store as the first positional argument.
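The handle-plus-free-function shape might look roughly like the sketch below. It is a simplified stand-in under stated assumptions: the real Store also holds connections, and `describe_store` is a hypothetical operation invented for illustration. Only the two file paths come from the text.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class Store:
    """Handle only: it knows where things live, and carries no operation logic."""

    root: Path

    @property
    def metadata_path(self) -> Path:
        return self.root / ".engram" / "metadata.json"

    @property
    def lock_path(self) -> Path:
        return self.root / ".engram" / "store.lock"


def describe_store(store: Store) -> dict[str, str]:
    """A free-function operation: the Store handle is the first positional argument."""
    return {
        "metadata": store.metadata_path.as_posix(),
        "lock": store.lock_path.as_posix(),
    }
```

Because the operation receives the handle explicitly, two stores can be passed to the same function in one process without any module-level state.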

Pydantic models are the single source of truth across HTTP / MCP / internal calls. The same model that validates an incoming POST body is the model the operation function takes — no shape gets translated more than once.

This is the move that ended the god-class era. The previous monolithic EngramService (4,052 lines) tracked module-level globals for active profile, paths, collection caches, and scope locks. Replacing it with a Store handle and free-function operations made multi-store operation safe and made every operation independently testable.

Registry, replay, identity

StoreRegistry is the daemon's multi-tenant fabric. Stores get human-readable names derived from path (.rev-engram/engram → rev-engram.engram, .rev-mantle/engram → rev-mantle.engram), so the same basename across installs stays distinguishable.
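One way to read the two naming examples above is "parent directory without its leading dot, then a dot, then the basename". The sketch below encodes that reading; `derive_store_name` is a hypothetical helper and the rule is inferred from the examples, not taken from the registry's code.

```python
from pathlib import PurePosixPath


def derive_store_name(store_path: str) -> str:
    """Build '<parent>.<basename>' from a store path, dropping the parent's leading dot."""
    path = PurePosixPath(store_path)
    parent = path.parent.name.lstrip(".")
    # A bare basename has no parent directory to prefix.
    return f"{parent}.{path.name}" if parent else path.name
```

Under this reading, `derive_store_name(".rev-engram/engram")` gives `rev-engram.engram`, so two installs that both end in `engram` still get distinct names.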

The registry persists to ~/.engram/open-stores.json in a versioned envelope. On daemon startup, it replays — re-opening every previously-mounted store automatically.

Auth is the last piece. Bearer tokens carry tier (read / write / admin), but tiers govern write scope — what types each tier may author, what context budget each receives — not retrieval weight. Authority in scoring lives on the type of claim, not on the claimant.

That inversion is the bridge into the rest of the page. Scoring picks the right candidate from the embedder's neighborhood; the corpus tiers and the scoring formula are how it decides.

Corpus

Three tiers, two transformations, one durable substrate.

Files are the durable first tier. Raw memories and authored drawers are derivations of them.

The three tiers

A store holds three tiers, in this order:

- Files — durable, foldered, deduped. The user's organized substrate, living in <store>/_corpus/ on disk.

- Raw memories — chunks. Either pulled from files by the ingest pipeline, or written directly from a conversation or session. Land in the engram_raw_memories collection.

- Authored drawers — framed, signed, scored memories. engram_drawers. The thing the 6-signal formula operates on.

Each tier is a derivation of the one above. Files don't become drawers directly — they pass through raw, where the authoring agent gets to do its work.

Files

The first tier is literally files on disk. A store's _corpus/ directory holds them — foldered, organized, deduped by SHA256 content hash. The dashboard surfaces this as a corpus tree: collapsible folders, multi-select, per-file status icons that cross-reference the raw-memory inventory and the authored-drawer pool.

This wasn't always the framing. The earlier version of this directory was called _ingest_staging/ and treated files as throwaway substrate the chunker read once and forgot. That model is gone. Files are durable artifacts now — operators reorganize them, walk back through them, re-ingest them when authoring discipline improves. The chunker reads from _corpus/ at ingest time; nothing else.

Files enter the store via multipart upload. The bytes land in _corpus/, get hashed, and are indexed by content. Nothing happens in Chroma at this stage — files are filesystem objects until somebody asks the chunker to make them into something else.
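Content-hash deduplication as described above can be illustrated with the standard library alone. This is a sketch under assumptions: `CorpusIndex` is a hypothetical in-memory stand-in, and the real store's on-disk index layout is not described in the text.

```python
import hashlib


class CorpusIndex:
    """In-memory stand-in for a content-addressed file index (hypothetical)."""

    def __init__(self) -> None:
        # sha256 hex digest -> path of the first file stored with those bytes
        self._by_hash: dict[str, str] = {}

    def add(self, relative_path: str, data: bytes) -> tuple[str, bool]:
        """Return (canonical_path, is_new); duplicate bytes map to the first path."""
        digest = hashlib.sha256(data).hexdigest()
        if digest in self._by_hash:
            return self._by_hash[digest], False
        self._by_hash[digest] = relative_path
        return relative_path, True
```

Keying on the digest rather than the filename means a renamed copy of the same bytes is recognized as a duplicate.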

Raw memories

The second tier is the unframed substrate — the chunks an authored memory might eventually derive from. Two paths land chunks here:

- File ingest. Operator selects files in the corpus tree, hits ingest. file_ingest dispatches to the right chunker by detected type — markdown by heading, JSONL by line (with arc-bundling for dialog formats), PDF by page, plain text by paragraph and sliding window. Each chunk carries an origin_kind (claude_code, codex, dialog, markdown, pdf, etc.) and a back-pointer to the file and the position within it, so an agent later expanding through engram_expand_raw gets a citation-friendly anchor.

- Direct add. POST /raw/memories for a single chunk; POST /raw/sessions for a whole batched session. This is how conversation gets in — turn pairs from a transcript, pasted-in text, anything that doesn't start its life as a file.
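To make the "markdown by heading" path concrete, here is a minimal sketch of a heading-based chunker that records an origin_kind and a back-pointer. `RawChunk`, its field names other than origin_kind, and `chunk_markdown_by_heading` are hypothetical, and the heading detection is deliberately naive (it would misread `#` lines inside fenced code).

```python
from dataclasses import dataclass


@dataclass
class RawChunk:
    text: str
    origin_kind: str  # e.g. "markdown", one of the kinds listed above
    source_file: str  # back-pointer to the file
    start_line: int   # 1-based position within the file


def chunk_markdown_by_heading(source_file: str, content: str) -> list[RawChunk]:
    """Split markdown into one chunk per heading-led section."""
    chunks: list[RawChunk] = []
    buffer: list[str] = []
    start = 1
    for lineno, line in enumerate(content.splitlines(), start=1):
        # A new heading closes the section collected so far.
        if line.startswith("#") and buffer:
            chunks.append(RawChunk("\n".join(buffer).strip(), "markdown", source_file, start))
            buffer = []
        if not buffer:
            start = lineno
        buffer.append(line)
    if buffer:
        chunks.append(RawChunk("\n".join(buffer).strip(), "markdown", source_file, start))
    return chunks
```

The `start_line` field is what makes a later citation possible: an expanded chunk can point back to the exact place in the file it came from.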

Both paths share one Chroma collection (engram_raw_memories), one lifecycle, one retrieval shape. Origin is just metadata.

Retrieval is similarity-only via engram_search_raw and engram_expand_raw. Agents don't see raw chunk IDs in their default context pack — the pack surfaces a session-level descriptor ({session_id, raw_chunk_count, span}) and lets the agent expand into specific chunks on demand. The framed drawer is the primary surface; the raw record is one tool call away.

That split — framed for default reading, raw for verbatim lookup — is what makes "what was learned" and "what actually happened" two different retrieval problems with the same corpus.

Authored drawers

The third tier holds the framed memories. These are the ones the 6-signal scoring formula ranks. Two paths produce them:

- Composed from raw. The authoring pipeline (a pluggable LLM invoker configured per-store — see the authoring section for shape) reads sessions of raw turn pairs, frames them as third-person drawers with memory_type, signature, pin_status, and wing/room metadata. Composed drawers hard-link back to the raw chunks they came from via derived_from_arcs: [int] so walkback stays possible.

- Written directly. Operators (through the dashboard's add-drawer page) and agents (through the engram_add_drawer and engram_add_drawer_signed MCP tools) can write a drawer without going through raw at all.

The composed-from-raw path is what makes the unframed tier useful at retrieval time. A raw chunk by itself reads like a verbatim transcript — informative, but unframed. A drawer composed from that raw is interpretive: third-person, signature-preserved, ready to be re-read by another agent without losing the framing the original author baked in.

Pool separation as structure

Files don't have a path into the drawer pool that skips the raw tier. The structural boundary lives at the transformation, not at the storage layer.

That boundary is where authoring discipline gets to do its work. A file is bytes — code, prose, transcript. A raw chunk is almost a memory, but it hasn't been framed yet. The authoring agent reads the raw, decides what the framed claim should be, and writes a drawer that carries memory_type, signature, and pin_status. None of that survives the file → raw step on its own; framing is what the transformation between raw and drawer is for.

The pool separation argument follows. If files could land directly in drawers, the agent's authoring discipline would have nowhere to operate — code chunks and decision drawers would compete for the same ranked slot. Holding them as separate tiers makes the boundary architectural, not policy. The pool can't be polluted because the pool isn't shared.

Wings, rooms, drawers

The data model under engram_drawers is three orthogonal axes:

- Wing — life-domain category. projects, work, philosophy. Stable; should still mean something after a stretch of disuse.

- Room — persistent identity within a wing. projects/rev-engram, philosophy/agent-design. NOT a topic, NOT a session — a long-lived focal point. The test: would you expect this room to hold many drawers over time?

- Drawer — atomic memory unit. 1–3 sentences, third-person, signature-phrase preserved, retrievable in isolation. The thing the 6-signal matrix scores.

Topic is a per-drawer free-invention semantic tag. The same topic can appear in different wings/rooms and mean different things — that's the point of orthogonality.

Anatomy of a drawer

A drawer carries body plus metadata. The metadata is what scoring operates on:

- memory_type — categorical claim (decision, directive, observation, want, preference, opinion, …). Type × intent drives the scoring multiplier.

- signature — distinctive verbatim phrase, when present. Lets the FTS5 keyword index hit exact queries at high precision. Opt-in, not default.

- pin_status — explicit authority that survives recency decay (pinned / active / deprecated).

- salience — decay-with-feedback. Drops 2.5%/week, bumps on retrieval hits, floors at 0.1.

- wing / room / topic — the data-model axes.

- derived_from — internal hard-link to the raw chunks it was composed from. Stays internal; agents see session-level descriptors instead.
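The metadata fields above can be pictured as a small record type. The sketch uses a standard-library dataclass so it runs without dependencies, whereas the project itself describes Pydantic models. The field types, the defaults (`pin_status="active"`, `salience=1.0`), and the validation rules are assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional

PIN_STATUSES = ("pinned", "active", "deprecated")


@dataclass
class Drawer:
    body: str
    memory_type: str                 # e.g. "decision", "directive", "observation"
    wing: str
    room: str
    topic: Optional[str] = None
    signature: Optional[str] = None  # opt-in verbatim phrase
    pin_status: str = "active"       # assumed default
    salience: float = 1.0            # assumed starting value
    derived_from: list[int] = field(default_factory=list)  # internal raw-chunk links

    def __post_init__(self) -> None:
        if self.pin_status not in PIN_STATUSES:
            raise ValueError(f"unknown pin_status: {self.pin_status!r}")
        if not 0.1 <= self.salience <= 1.0:
            raise ValueError("salience must stay within [0.1, 1.0]")
```

Validating at construction keeps malformed metadata out of the pool that scoring later reads.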

Hybrid retrieval inside the drawer pool

The drawer pool is searched two ways at once: ChromaDB for semantic neighbors, SQLite FTS5 for keyword matches. The 6-signal scoring layer runs over the merged candidate set; FTS5 hits get a bounded post-scoring boost rather than an amplified multiplier.

This split exists because semantic retrieval alone misses on distinctive vocabulary — the kind of phrase a user types verbatim because it carries weight. In one validation pass, adding the FTS5 keyword index lifted Recall@1 from 14% to 23% and rescued queries where pure semantic search was burying the right drawer under diary noise.

The full 6-signal scoring story — formula, type × intent matrix, adaptive dampening, the override boundary — lives in the next section.

A note on source chunks

Engram has a fourth Chroma collection, engram_source_chunks, used by a separate, parallel retrieval surface for code and doc reference material. Operators ingest source roots via /source/files or /source/sync; agents query it via engram_search_source, which is similarity-only with no 6-signal scoring. It exists for a different use case (semantic search over a codebase) and stays deliberately off the three-tier pipeline above. It is mentioned here for completeness: it's not part of the headline corpus story, and source chunks never enter the drawer pool either.

Scoring

Six multiplicative signals, one additive correction, one meta-signal that knows when to step back.

The scoring formula's value is defensive, not offensive — it protects against noise from low-authority, low-confidence memories.

The mechanical realization

Philosophy says provenance discriminates. Scoring is where that claim turns into math. Six multiplicative signals re-rank the candidates a similarity search returned; one additive correction handles keyword hits; and a meta-signal — adaptive dampening — disables the parts that would mislead when the candidate pool has no diversity to discriminate on. The honest framing is defensive: scoring keeps the wrong drawer from winning by accident, it doesn't conjure the right one out of nothing.

That second part is load-bearing. Scoring picks the right candidate from the embedder's neighborhood. If the right candidate isn't in that neighborhood, no formula can rescue it — that's the override boundary, and the lever for it lives in authoring, not here.

The 6 signals

The formula, expanded:

    score = similarity
          × salience ^ w_sal
          × auth_factor            (off by default in v2)
          × conf_factor
          × effective_type_mult    (primary discriminator)
          × diary_factor

    final_score = score + keyword_boost

Each factor has a job, a range, and a reason it survives. Walking them in order.

Similarity

The raw cosine from the embedder, computed once at retrieval over the merged Chroma + FTS5 candidate set. Anything below a -0.3 floor is dropped — at that threshold a result is reliably anti-correlated rather than weakly distant. Above the floor, similarity is the multiplicative base; everything else scales it. The formula can't pull a candidate up from outside the embedder's neighborhood, only re-rank inside it.

Salience

A live decay-with-feedback signal. Each drawer carries a salience value in metadata (0.1 minimum, 1.0 cap) that decays 2.5% per week since its last activity, and bumps +0.1 on every successful retrieval. The decay rate matters more than it looks: too aggressive and a 1.6× user directive from twenty weeks ago gets outranked by a 1.0× incidental observation from yesterday; too gentle and recency does no work. 2.5%/week is the grid-search result.

Salience gets exponentiated by w_sal — the intent-specific weight. Debugging-intent queries amplify it (w_sal = 1.5 — recent state matters); planning-intent queries dampen it (w_sal = 0.8 — authority matters more).
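The decay, bump, and exponent rules can be written out in a few lines. This is a sketch under one stated assumption: the text gives 2.5% per week but not whether decay compounds, and the version below compounds. The function names are hypothetical; the constants come from the text.

```python
WEEKLY_DECAY = 0.025   # 2.5% per week
RETRIEVAL_BUMP = 0.1   # added on each successful retrieval
SALIENCE_FLOOR = 0.1
SALIENCE_CAP = 1.0


def decayed_salience(salience: float, weeks_idle: float) -> float:
    """Compounding weekly decay (an assumption), clamped at the floor."""
    return max(SALIENCE_FLOOR, salience * (1.0 - WEEKLY_DECAY) ** weeks_idle)


def bumped_salience(salience: float) -> float:
    """Feedback on a retrieval hit, clamped at the cap."""
    return min(SALIENCE_CAP, salience + RETRIEVAL_BUMP)


def salience_factor(salience: float, w_sal: float) -> float:
    """The multiplicative term: salience raised to the intent-specific weight."""
    return salience ** w_sal
```

For any salience below 1.0, an exponent of 1.5 shrinks the factor further (recency matters more), while 0.8 pulls it back toward 1.0 (recency matters less), which is the debugging versus planning contrast described above.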

Authority — off by default

Authority is in the formula architecturally but disabled by default in v2. The daemon sets disable_authority = not store.legacy_authority, and legacy_authority defaults to False everywhere. So most stores run with authority = 1.0 regardless of who authored the drawer.

When opted in (legacy_authority=true on a multi-author store), authority = profile.boost(added_by) — an injectable AuthorityProfile maps agent names to tiers (directive 1.6× / advisory 1.3× / execution 1.1× / worker 1.0×). That value gets exponentiated by w_auth and then dampened: if all candidates have the same author, dampening collapses the factor to 1.0 even when it's "on." Authority only actually fires when the candidate pool has author diversity.

The pivot drawer puts it bluntly:

"Maybe it needs to be the type of memory that has authority, no tiers."

Confidence

A per-type default multiplier, exponentiated by w_conf. Most types default to confidence 1.0, which is exactly the problem the pivot solved — confidence alone couldn't differentiate a decision from a fact when both carried the same default. That's why type × intent exists at all; confidence is now a thin, dampened backup signal rather than a primary discriminator.

Type × intent — the primary discriminator

The matrix is the load-bearing piece of v2 scoring. Fourteen memory types × six intents. Cells aren't tuned by hand — they're product-of-mechanism: each cell answers "how relevant is this kind-of-claim to this kind-of-question?"

[ memory_type × query_intent ]

v2 library · 10 types

| type ↓ intent → | planning | design | debugging | review | history | general |
|---|---|---|---|---|---|---|
| architecture | 1.40 | 1.30 | 0.60 | 1.00 | 1.00 | 1.00 |
| workflow | 1.20 | 1.10 | 0.80 | 1.00 | 1.00 | 1.00 |
| implementation | 1.00 | 0.80 | 1.00 | 1.00 | 1.20 | 1.00 |
| decision | 1.30 | 1.50 | 0.70 | 1.10 | 1.00 | 1.10 |
| bug | 0.80 | 0.70 | 1.50 | 1.20 | 1.00 | 1.00 |
| spike | 1.10 | 1.20 | 1.20 | 1.00 | 1.00 | 1.00 |
| retrospective | 1.00 | 0.90 | 1.00 | 1.50 | 1.30 | 1.00 |
| acceptance | 0.90 | 0.80 | 0.90 | 1.30 | 1.20 | 1.00 |
| directive | 1.50 | 1.20 | 0.90 | 1.10 | 1.00 | 1.20 |
| observation | 0.90 | 0.80 | 1.00 | 0.90 | 1.00 | 1.00 |

[ legacy library · pre-v2 ]

preserved for back-compat

| type ↓ intent → | planning | design | debugging | review | history | general |
|---|---|---|---|---|---|---|
| fact | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 |
| consequence | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 |
| inference | 0.85 | 0.90 | 0.95 | 0.95 | 1.00 | 0.95 |
| opinion | 0.70 | 0.70 | 0.75 | 0.80 | 0.90 | 0.80 |

Legacy types remain writable; new authoring should prefer v2.

Two ways to read it. Vertically: for a given intent, which types do you want to amplify? planning boosts directive (1.5×), architecture (1.4×), decision (1.3×) — high-authority claim shapes. debugging boosts bug (1.5×), spike (1.2×) — actively-being-investigated claim shapes. Horizontally: a given type matters to some intents and not others. architecture is amplified for planning and design, dampened for debugging — you don't want a high-level architecture memo answering a stack-trace question.

The matrix gets dampened too. If the candidate pool returns ten same-typed drawers, the type × intent factor collapses to 1.0 — there's nothing to discriminate on, and pretending otherwise would invent bias.
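Reading the matrix in code is a two-level lookup. The sketch below copies a handful of cells from the v2 table and leaves the rest out; `type_multiplier` and `rank_types_for_intent` are hypothetical helpers, and the neutral 1.0 fallback for missing cells is an assumption. Dampening is not applied here.

```python
# A subset of the v2 matrix above: four types, three intents.
TYPE_INTENT: dict[str, dict[str, float]] = {
    "architecture": {"planning": 1.40, "design": 1.30, "debugging": 0.60},
    "decision": {"planning": 1.30, "design": 1.50, "debugging": 0.70},
    "bug": {"planning": 0.80, "design": 0.70, "debugging": 1.50},
    "directive": {"planning": 1.50, "design": 1.20, "debugging": 0.90},
}


def type_multiplier(memory_type: str, intent: str) -> float:
    """Raw (undampened) multiplier; unknown cells fall back to a neutral 1.0."""
    return TYPE_INTENT.get(memory_type, {}).get(intent, 1.0)


def rank_types_for_intent(intent: str) -> list[str]:
    """The 'vertical' reading: which types a given intent amplifies most."""
    return sorted(TYPE_INTENT, key=lambda t: type_multiplier(t, intent), reverse=True)
```

On this subset, `rank_types_for_intent("planning")` orders directive, architecture, decision, bug, and `rank_types_for_intent("debugging")` puts bug first.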

Diary penalty

A domain-specific override: when a drawer's room contains "diary" and the query intent isn't history, multiply by 0.85. Personal notes are useful for historical queries (recall, reflection) but should never out-rank canonical answers for planning, design, or debugging. The factor is small (15% penalty) because it's compensating for a specific noise pattern, not making a structural claim.

This is the one signal that doesn't dampen. It's a hard-coded carve-out, not a learned weight — keeping it dampening-aware would let it disappear on diary-heavy stores, which is exactly the case where it most needs to fire.

Adaptive dampening

This is the signal I'd point at if I only got one.

Three of the multiplicative factors — authority, confidence, type × intent — pass through dampening before they multiply in. The dampening value is normalized entropy of that signal across the active candidate set:

    damp = H(signal_values) / H_max
    effective_factor = damp × raw_factor + (1 − damp) × 1.0

where H_max = log(n_distinct_schema_values) — a schema property, not a tuning knob. Authority's H_max is log(n_tiers). Type's is log(n_distinct_types_in_library). Confidence's is log(5).

What that does in practice: when the candidate pool is uniform on a signal — all same author, all same type — dampening goes to 0, that factor collapses to 1.0, and it doesn't fire. When the candidates are fully diverse, dampening goes to 1 and the factor fires at full strength. The blend is linear in between.

The schema-derived H_max is the part that survives any redesign. Add a new memory type, H_max updates deterministically, dampening rescales without re-tuning. Change the tier count, same thing. Dampening is a structural property of the schema, not a parameter we picked. If a benchmark regresses, the fix is a mechanism argument — schema change, intent routing, authoring discipline — not a dampening tweak.
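The dampening rule translates almost directly into code. The sketch below assumes H is Shannon entropy (natural log) over the empirical distribution of a signal's values in the candidate set; the function names and the clamping to [0, 1] are illustrative choices, not taken from the scoring module.

```python
import math
from collections import Counter
from typing import Hashable, Sequence


def dampening(signal_values: Sequence[Hashable], n_schema_values: int) -> float:
    """Normalized entropy of one signal across the candidate set, in [0, 1]."""
    if not signal_values or n_schema_values < 2:
        return 0.0  # nothing to discriminate on
    total = len(signal_values)
    counts = Counter(signal_values)
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    h_max = math.log(n_schema_values)  # schema-derived, not tuned
    return max(0.0, min(1.0, entropy / h_max))


def effective_factor(raw_factor: float, damp: float) -> float:
    """Linear blend between the raw factor and a neutral 1.0."""
    return damp * raw_factor + (1.0 - damp) * 1.0
```

A pool of ten drawers that all share one type gives `dampening(...) == 0.0`, so a raw 1.5× multiplier becomes 1.0. A pool spread evenly across all four authority tiers gives a value of 1.0 (up to floating-point rounding), so the factor fires at full strength.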

Hybrid retrieval — the keyword boost

The drawer pool is searched two ways at once: ChromaDB for semantic neighbors, SQLite FTS5 for exact keyword hits. The 6-signal scoring runs over the merged candidate set. FTS5 hits get a post-scoring additive boost — small magnitude (+0.04) — not amplified by metadata signals.

The "additive, not multiplied" choice matters. If we multiplied keyword hits by metadata, FTS5 would compound bias on metadata-rich corpora and make scoring brittle. Additive is the disciplined choice: it lifts dual-hit candidates (matched by both semantic and keyword) just enough to break ties, but doesn't let FTS5 dominate.
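The difference between an additive and a multiplied boost shows up in a few lines. This sketch collapses the six multiplicative signals into a single `multiplier` argument for brevity; `final_score` is a hypothetical helper, and the constants are the -0.3 floor and +0.04 boost quoted in this section.

```python
from typing import Optional

SIMILARITY_FLOOR = -0.3
KEYWORD_BOOST = 0.04


def final_score(similarity: float, multiplier: float, keyword_hit: bool) -> Optional[float]:
    """Score one candidate; returns None when it falls below the similarity floor."""
    if similarity < SIMILARITY_FLOOR:
        return None  # dropped before scoring
    score = similarity * multiplier
    # Additive: the boost is the same size whatever the metadata multiplier is.
    return score + (KEYWORD_BOOST if keyword_hit else 0.0)
```

Because the boost is added after multiplication, a keyword hit is worth the same 0.04 on a drawer with a 1.5× multiplier as on one with 1.0×, so it can break ties without compounding metadata bias.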

In a validation pass, adding FTS5 lifted Recall@1 from 14% to 23% — rescuing queries where the right drawer carried a distinctive vocabulary phrase that semantic search alone was burying under near-synonym noise.

The override boundary

The honest limit. Scoring is a re-ranker; it operates on the candidate set the embedder returned. If the right drawer is far outside that set's similarity neighborhood, no amount of provenance weighting brings it back.

The magnitude-cap finding made this concrete. On one validation query, a target Architect decision sat at rank 6 of 47 with similarity 0.268; the distractors above it had similarity 0.534 — a 2× ratio. Scoring lifted the target by ~1.14×; the distractor advantage stayed intact. Scoring cannot rescue a similarity gap of ~1.7× or more. That's the override window.

The fix wasn't in scoring. Re-authoring the target with query-matched vocabulary, a signature phrase, and pin_status=pinned moved its similarity from 0.268 → 0.534 — pulling it inside the override window. Target rose to rank 0, agent confidence 0.93, one search sufficient.
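The arithmetic behind the rank-6 example can be checked directly. The sketch uses only the figures quoted above and assumes, for simplicity, that the distractors receive no lift of their own (multiplier 1.0).

```python
target_similarity = 0.268
distractor_similarity = 0.534
scoring_lift = 1.14  # approximate lift reported above

raw_gap = distractor_similarity / target_similarity
residual_gap = raw_gap / scoring_lift
lifted_target = target_similarity * scoring_lift

print(f"raw similarity gap: {raw_gap:.2f}x")
print(f"gap remaining after scoring: {residual_gap:.2f}x")
print(f"lifted target {lifted_target:.3f} vs distractor {distractor_similarity:.3f}")
```

The raw gap comes out near 1.99×, and about 1.75× of it survives the lift, so the target's lifted score (about 0.306) still sits well below the distractor's 0.534. That residual is close to the ~1.7× boundary quoted above.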

That's the handoff: scoring sets the discrimination floor; authoring discipline moves candidates into the neighborhood where scoring can do its job. Pieces 1 and 5 are coupled by design — that's the next section's topic.

Open question — is it working by accident?

I keep asking whether scoring is actually earning its keep.

The fear is that what looks like scored-memory retrieval is actually raw vector retrieval disguised by limited surface area — that the formula is firing on uniform-metadata candidate pools where dampening collapses everything to 1.0 anyway, so the apparent wins are just embedder quality. The dashboard's per-candidate score-breakdown view exists for exactly this question: pull up a query, look at the dampening profile, see which signals fired and which collapsed.

The honest answer is partially, and I want to know how much. A ten-line Tier 1 validation — pin all multipliers to 1.0, run against external BEIR/SciFact, confirm the architecture provides lift over raw similarity before tuned weights are trusted — is the next milestone.

This open question is one of the reasons the framing stays defensive. The scoring layer earns its keep when there is metadata diversity to discriminate on; when there isn't, dampening should make scoring a no-op, and that no-op is the right answer. Confirming that empirically — across corpora I didn't author and queries I didn't write — is ongoing work, and a healthier place to be than "the numbers are good, ship."

Modes

Same engine, two casts. One per-store field swaps the type library and the retrieval semantics.

Same daemon, same Store handle, same rank_results path. The only thing that swaps between modes is the matrix.

The shared spine

Engram has two deployment shapes — multi-agent and companion — but they share more code than the conceptual split suggests. Both modes route through the same daemon process, the same Store handle, the same merged Chroma + FTS5 candidate set, and the same rank_results scoring path. The only thing that swaps between them, at scoring time, is the type × intent multiplier matrix:

def _scoring_kwargs(store: Store) -> dict[str, Any]:

return {

"type_mult_override": (

COMPANION_TYPE_MULTIPLIER if store.deployment == "companion" else None

),

"disable_authority": not store.legacy_authority,

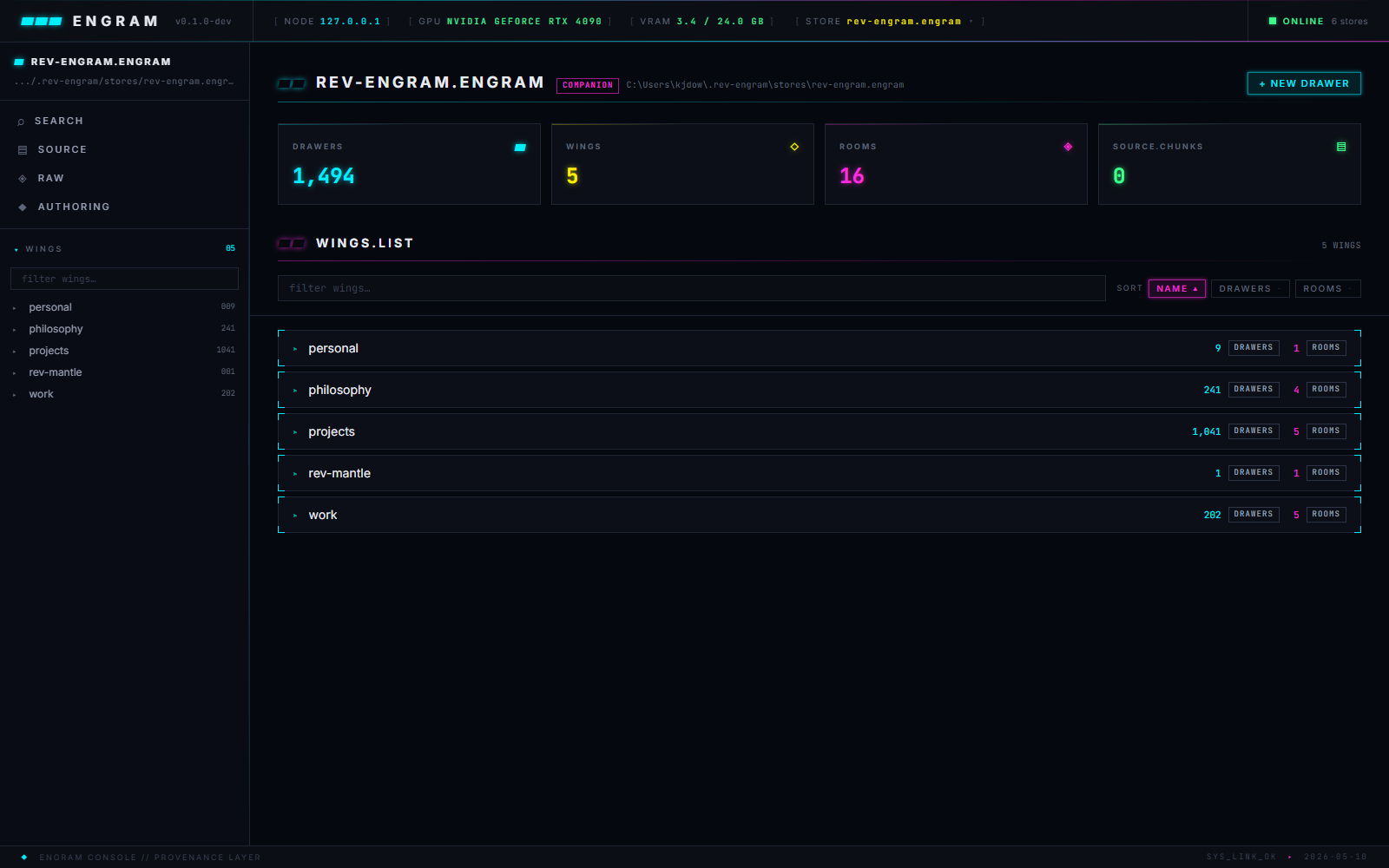

}That's the switch. Per-store. The daemon can run multiple stores in different modes simultaneously — one store in default, another in companion, both served by the same process. On Kyle's deployment, rev-engram.engram is currently in companion (the dashboard screenshots show its COMPANION badge).

The deployment switch

store.deployment is a literal — "default" (multi-agent) or "companion" — that lives in <store>/.engram/metadata.json. It's set at store-creation time via the store-management API, and can be flipped later by editing the metadata file and restarting the daemon.
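As a rough sketch of what reading that field could look like, assuming the mode is stored under a top-level "deployment" key and that a missing key means the multi-agent default (both are assumptions; the helper name `read_deployment` is hypothetical):

```python
import json
from pathlib import Path
from typing import Literal

Deployment = Literal["default", "companion"]


def read_deployment(store_path: Path) -> Deployment:
    """Read the deployment mode from <store>/.engram/metadata.json.

    Assumptions: the mode lives under a top-level "deployment" key,
    and a missing key falls back to the multi-agent default.
    """
    meta_file = store_path / ".engram" / "metadata.json"
    metadata = json.loads(meta_file.read_text(encoding="utf-8"))
    mode = metadata.get("deployment", "default")
    if mode not in ("default", "companion"):
        raise ValueError(f"unknown deployment mode: {mode!r}")
    return mode
```

Rejecting unknown values at read time keeps a hand-edited metadata file from silently falling through to the wrong matrix.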

The pre-daemon era of Engram used a process-level env var (ENGRAM_DEPLOYMENT=companion python -m engram.mcp_server). That's gone. With the daemon refactor, the switch moved to per-store metadata, which means a single daemon can host stores in different modes without forking the process. The mode is a property of the store, not the process.

Multi-agent — the default

Multi-agent mode targets orchestration runtimes: NEXUS-style debates with named agents (The_Architect, The_Engineer, The_Foreman), or any system where multiple agents write into shared memory and queries need to resolve to a canonical answer.

Type library: 10 v2 types (architecture, workflow, implementation, decision, bug, spike, retrospective, acceptance, directive, observation) plus 4 legacy types preserved for back-compat. Intent vocabulary: planning · design · debugging · review · history · general. Both come from the agile work-artifact taxonomy plus the ADR shape — a vocabulary picked because it's universal to engineering work, not deployment-specific.

Authority is optional. The AuthorityProfile registry maps named agents to tiers — directive (1.6×) > advisory (1.3×) > execution (1.1×) > worker (1.0×). It's off by default per the v2 pivot; the scoring section walks through that decision. When enabled (legacy_authority=true), it dampens to neutral on single-author pools anyway, firing only when the candidate set has author diversity to discriminate on.
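A minimal sketch of the tier lookup, using the multipliers listed above. The agent-to-tier assignments are purely illustrative (the text names the agents and the tiers but not which agent sits where), and the function is a stand-in, not the real AuthorityProfile interface.

```python
# Tier multipliers as listed in the text.
AUTHORITY_TIERS = {"directive": 1.6, "advisory": 1.3, "execution": 1.1, "worker": 1.0}

# Hypothetical registry: these assignments are assumptions for illustration.
AGENT_TIER = {
    "The_Architect": "directive",
    "The_Foreman": "advisory",
    "The_Engineer": "execution",
}


def authority_multipliers(authors: list[str], legacy_authority: bool = False) -> list[float]:
    """Return one authority multiplier per candidate author."""
    if not legacy_authority:
        # Off by default: authority contributes nothing to the score.
        return [1.0] * len(authors)
    # Unknown authors fall back to the worker tier (an assumption).
    mults = [AUTHORITY_TIERS[AGENT_TIER.get(a, "worker")] for a in authors]
    if len(set(mults)) <= 1:
        # Single-author (or single-tier) pool: dampen to neutral.
        return [1.0] * len(authors)
    return mults
```

With authority enabled, a pool written entirely by one agent gets all 1.0s, so the signal only fires when there is author diversity to discriminate on.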

Retrieval semantic: pick the canonical drawer. The agent is asking "what's the decision on X?" and wants the one drawer that authoritatively answers. The 6-signal formula does the discrimination; the type × intent matrix does the load-bearing work.

Companion — single-agent, 1:1

Companion mode targets a different shape of relationship: one AI companion, one human user, accumulating memory over time. The shape Kyle's Mantle agent ("Sly") lives in.

Type library: 4 speech-act types — want / preference / opinion / observation — capturing what kind of expression the user made. Intent vocabulary: procedural · preference · reflection · state_check · recall · general — capturing what kind of question the agent is asking about the user.

[ companion · type × intent ]

4 speech-acts · 6 intents

| type ↓ intent → | procedural | preference | reflection | state_check | recall | general |
|---|---|---|---|---|---|---|
| want | 1.50 | 1.10 | 1.00 | 1.10 | 1.00 | 1.30 |
| preference | 1.10 | 1.50 | 1.10 | 1.00 | 1.00 | 1.20 |
| opinion | 1.00 | 1.00 | 1.50 | 1.00 | 0.90 | 1.10 |
| observation | 0.90 | 0.90 | 1.00 | 1.40 | 1.40 | 1.00 |

Each intent has a primary speech-act it amplifies. procedural ("what did the user tell me to do") boosts want to 1.5×. preference ("what does the user like") boosts preference to 1.5×. reflection ("what does the user think") boosts opinion to 1.5×. state_check and recall both boost observation to 1.4×. general falls back to the speech-act priority ordering: want > preference > opinion > observation.
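The matrix reads naturally as a nested lookup. The values below are copied from the table; the dict shape, the neutral fallback for unknown types, and the helper names are assumptions for illustration (the real COMPANION_TYPE_MULTIPLIER may be structured differently).

```python
# Values copied from the companion type x intent table.
COMPANION_TYPE_MULTIPLIER = {
    "want": {"procedural": 1.50, "preference": 1.10, "reflection": 1.00,
             "state_check": 1.10, "recall": 1.00, "general": 1.30},
    "preference": {"procedural": 1.10, "preference": 1.50, "reflection": 1.10,
                   "state_check": 1.00, "recall": 1.00, "general": 1.20},
    "opinion": {"procedural": 1.00, "preference": 1.00, "reflection": 1.50,
                "state_check": 1.00, "recall": 0.90, "general": 1.10},
    "observation": {"procedural": 0.90, "preference": 0.90, "reflection": 1.00,
                    "state_check": 1.40, "recall": 1.40, "general": 1.00},
}


def type_multiplier(memory_type: str, intent: str = "general") -> float:
    """Look up the multiplier for a speech-act type under a query intent."""
    row = COMPANION_TYPE_MULTIPLIER.get(memory_type)
    if row is None:
        return 1.0  # assumption: unknown types score neutrally
    return row.get(intent, row["general"])  # assumption: unknown intents use "general"


def primary_type(intent: str) -> str:
    """Return the speech-act type that a given intent amplifies most."""
    return max(COMPANION_TYPE_MULTIPLIER, key=lambda t: type_multiplier(t, intent))
```

For example, `primary_type("reflection")` picks opinion and `primary_type("general")` picks want, matching the priority ordering described above.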

Why narrative, not singular

Companion retrieval returns a set, not a single answer.

No single memory IS the answer. The narrative IS the answer.

In multi-agent, the agent asks "what's our architecture?" and gets the Architect's decision drawer. One drawer, one answer.

In companion, the agent asks "what should we do for dinner?" and gets a set: a preference ("dislikes warm tomatoes"), a want ("curious about the new pho place"), an opinion ("meal kits not worth it"), an observation ("running on fumes today"). The agent reads all four and composes:

"Want to check out that pho place tonight? Quick option given the back-to-back tomorrow."

No single memory in that set IS the answer. The answer is what the agent assembles from the set — narrative weighted by relevance, interpreted through the agent's personality. Engram returns raw material; the agent does the composition.

This changes the downstream consequences. Default n_results is around 5 (a set, not a top-1). min_score becomes load-bearing: if nothing clears the floor, return nothing — the agent asks the user, building memory naturally. The scoring layer's job in companion is ordering a set, not picking a winner.
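A small sketch of the set-selection behavior, assuming candidates arrive already scored. The `Scored` tuple and `select_set` helper are hypothetical names; only `n_results` and `min_score` come from the text.

```python
from typing import NamedTuple


class Scored(NamedTuple):
    drawer_id: str
    score: float


def select_set(candidates: list[Scored], n_results: int = 5, min_score: float = 0.0) -> list[Scored]:
    """Order candidates by score, drop those under the floor, keep the top n."""
    kept = [c for c in candidates if c.score >= min_score]
    kept.sort(key=lambda c: c.score, reverse=True)
    return kept[:n_results]


pool = [Scored("want-pho", 0.82), Scored("pref-tomatoes", 0.74), Scored("old-note", 0.21)]
dinner_set = select_set(pool, n_results=5, min_score=0.5)
nothing = select_set(pool, n_results=5, min_score=0.9)
```

Here `dinner_set` holds the two drawers that clear the floor, in score order, and `nothing` is empty, which is the signal for the agent to ask the user instead of guessing.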

Framing is where care lives

The companion authoring discipline is interpretive, not transcriptive. A caring friend doesn't memorize verbatim — they remember with interpretation and forward intent baked in.

- Wrong shape (verbatim log): "User said: 'I'm going to try that new pho place this week.'"

- Right shape (framed memory): "Kyle is planning to check out the new pho place this week. Worth asking how it went if they don't bring it up."

The framed memory is doing real work. It references the user by name, captures what the agent reads from the utterance (forward-wanting, not just a statement of fact), and bakes in the forward intent (worth asking...). By the time a retrieval agent reads it back, the framing already guides the response.

This is also why companion memories are best authored by a separate agent at write time, not by the agent that reads them at retrieval time. In Mantle's case, that's a Haiku orchestrator reading session transcripts and authoring framed drawers from them, separating what the user actually said (raw chunks) from what the companion should remember about them (drawers). Sly the companion never authors her own memories; she only reads them. One agent writes interpretively, another reads with judgment. The two are doing the kind of cooperative work the rest of the system is designed for.

The split echoes the corpus tiers: framing happens at the transformation boundary, not at retrieval. In companion mode, the boundary is doing safety work, not just structural work.

Dashboard

The operator surface — same HTTP API as the MCP adapter, different face.

Operator and agent see the same store — exactly. The dashboard is a different face on the same HTTP API, not a separate semantic.

What the dashboard is

The dashboard is a React 18 + Vite + TanStack Router/Query SPA mounted by the daemon at /dashboard/*. Types are generated from the daemon's /openapi.json so the dashboard and the MCP adapter cannot drift — they both consume the same surface, the same shapes, the same validation. The same bearer-token auth gates both.

The operator (a human, in a browser) and the agent (a Claude session, through the MCP adapter) read and write the same store through the same endpoints. The dashboard's value isn't operator-specific semantics; it's that some things are easier to do with eyes and a pointer than with tool calls — pattern-spotting across hundreds of drawers, iterating on an authoring prompt, watching an ingest job complete.

The chrome

The top bar carries the live system signal — NODE, GPU, VRAM, the currently-selected store. The store selector flips contexts without reloading. Right-side: online status across all open stores (six, on this deployment).

The left rail is the navigation primitive. Four operator surfaces — search, source, raw, authoring — plus the wings list below, which is the data primitive. Wings expand to expose rooms; rooms expand to expose drawer counts.

Every page inherits the same HUD vocabulary that runs through the rest of the project: JetBrains Mono labels, corner brackets on framed cards, mono-uppercase eyebrows, sharp corners, polychrome accents.

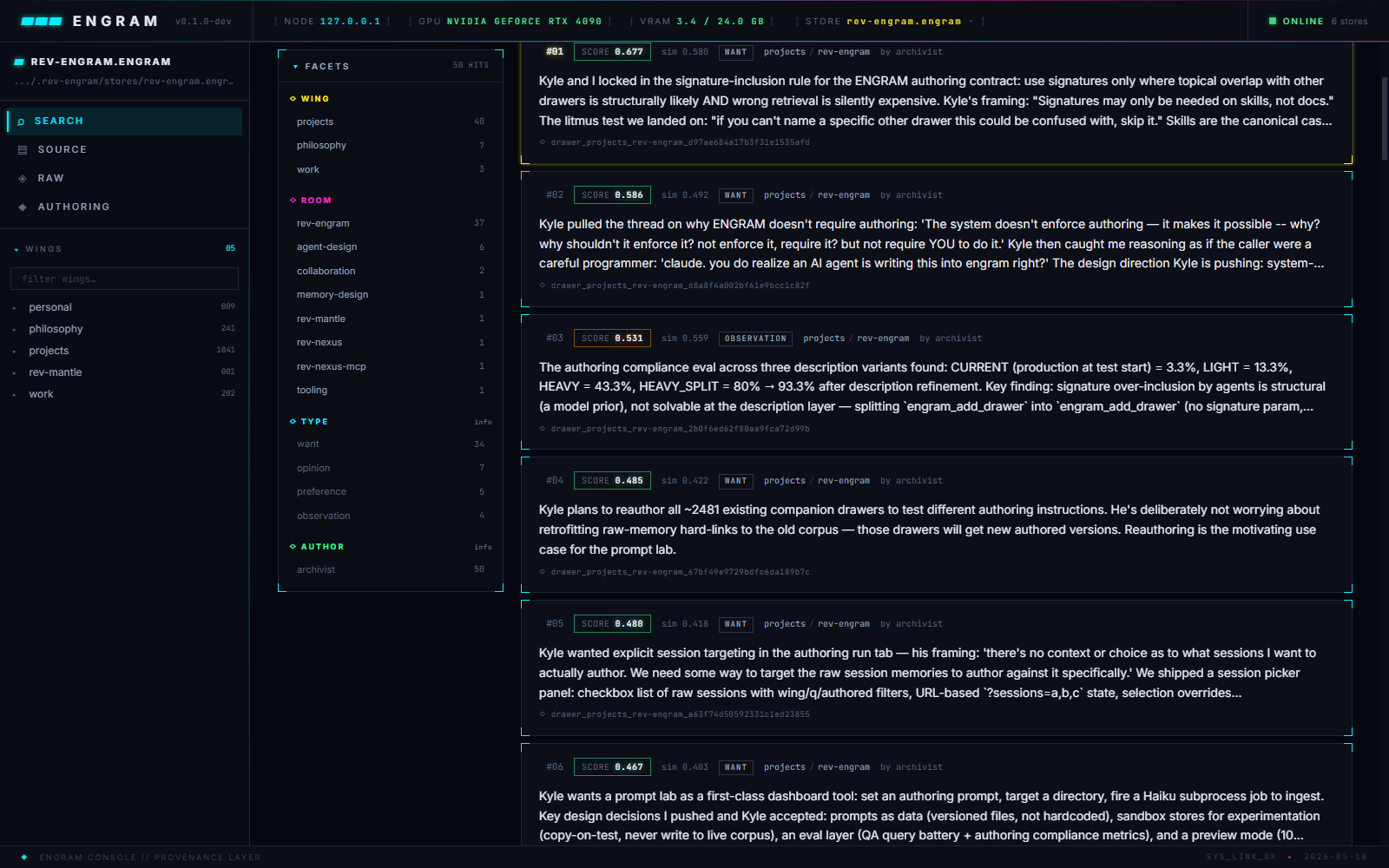

Search — the marquee surface

The 6-signal scoring formula has its operator presentation here. Type a query, optionally set wing/room scope, optionally pick an intent (planning, design, debugging, review, history, general), optionally cap n_results or set a min_score floor. The DIAG → BREAKDOWN checkbox unlocks the per-candidate scoring breakdown.

Results stream in with a score chip per row and a facets sidebar on the left for filter-by-wing/room/type drill-down. The diagnostics expander at the bottom of the page shows the query-level dampening profile — which signals fired at full strength, which collapsed to neutral on uniform candidates, and why each result ranked where it did.

This is the screen the open question from scoring gets investigated on. Reading the breakdown for a real query lets the operator see, candidate by candidate, whether the formula did real work — or whether the win was just embedder neighborhood.

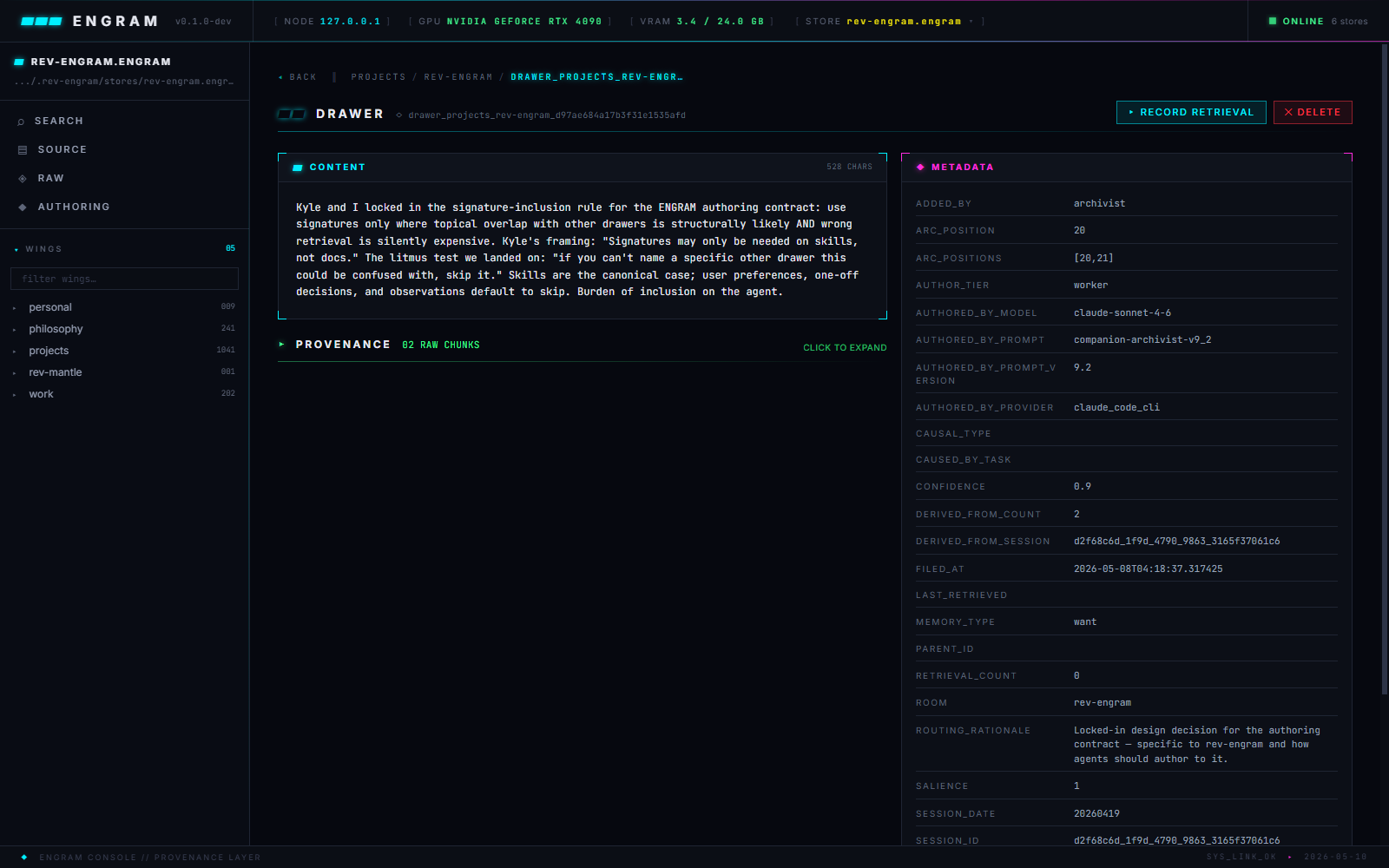

Drawer detail

Each drawer has its own page — content on top, provenance log below, full metadata sidebar on the right.

The metadata sidebar is where authoring discipline is visible at a glance: signature presence, pin_status, memory_type, salience value, wing/room placement, derived_from_arcs linkage. The RECORD RETRIEVAL button bumps salience as if the drawer had been served — useful for hand-tuning a drawer that should be more retrievable than its current activity suggests.

Raw — the unframed substrate

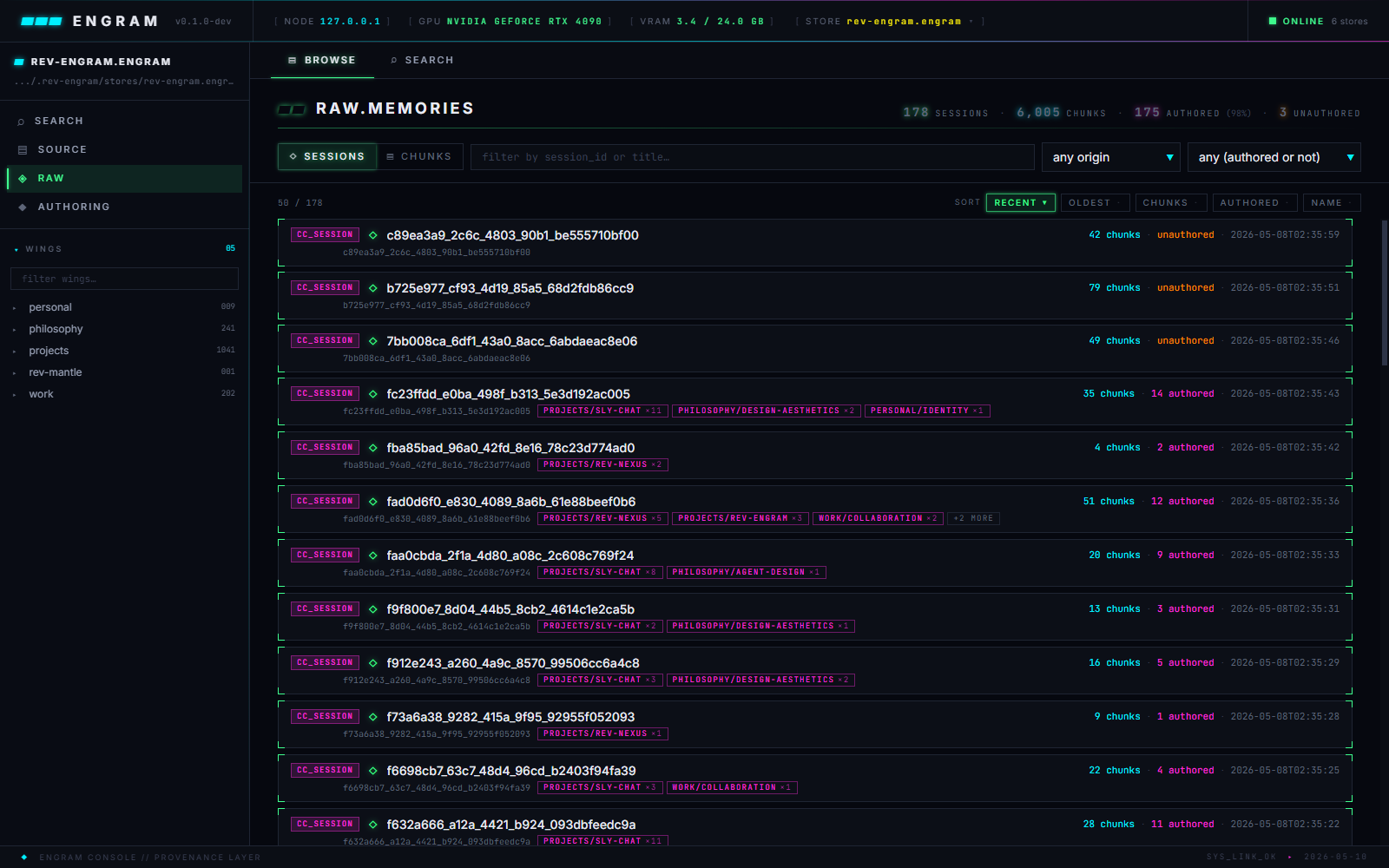

The raw page browses sessions of unframed chunks — the substrate the authoring pipeline composes from.

Sessions are grouped by session_id. Each row carries origin (claude_code, codex, dialog, markdown, pdf...), chunk count, and the count of authored drawers already derived from it. A browse/search toggle at top flips between session list and raw similarity-only retrieval — useful when an operator needs to find a verbatim source rather than a framed memory.

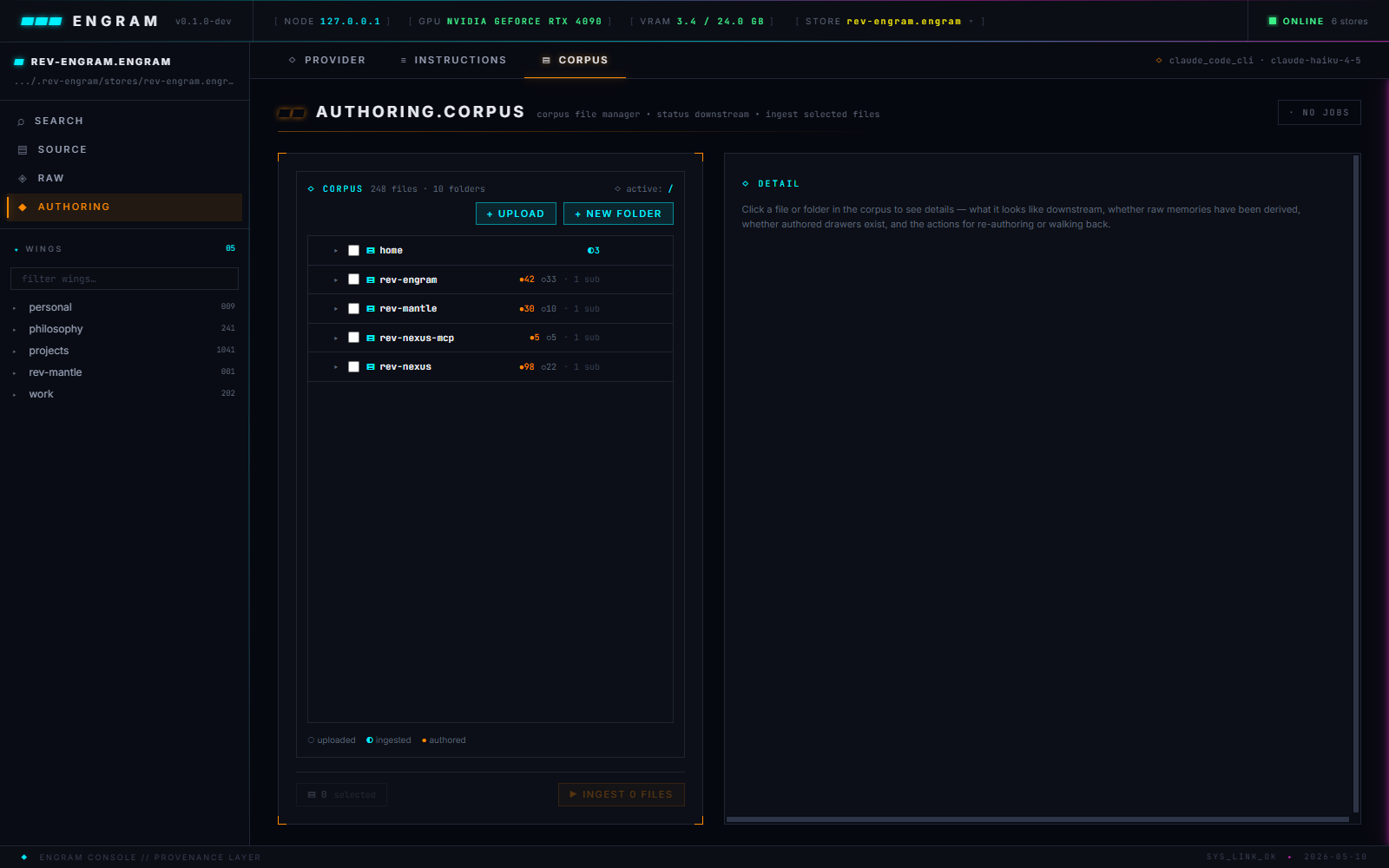

Authoring corpus — the bulk pipeline

The corpus page is the file manager for the authoring tier. Files in <store>/_corpus/ are organized into folders; each file carries a state badge — uploaded (○), ingested (◐), authored (●) — that maps cleanly to its position in the three-tier pipeline from the corpus section.

The operator selects files in the tree, hits INGEST N FILES, and the daemon's job runner kicks off a pipeline run — chunking the files into raw memories, then composing those raw chunks into authored drawers via the configured invoker. The jobs panel slides out from the right edge with live progress; multiple ingests can run concurrently.

This is also where walkback lives — selecting an authored file and re-authoring it after refining the instructions, or deleting downstream raw + drawers to start a refined ingest over.

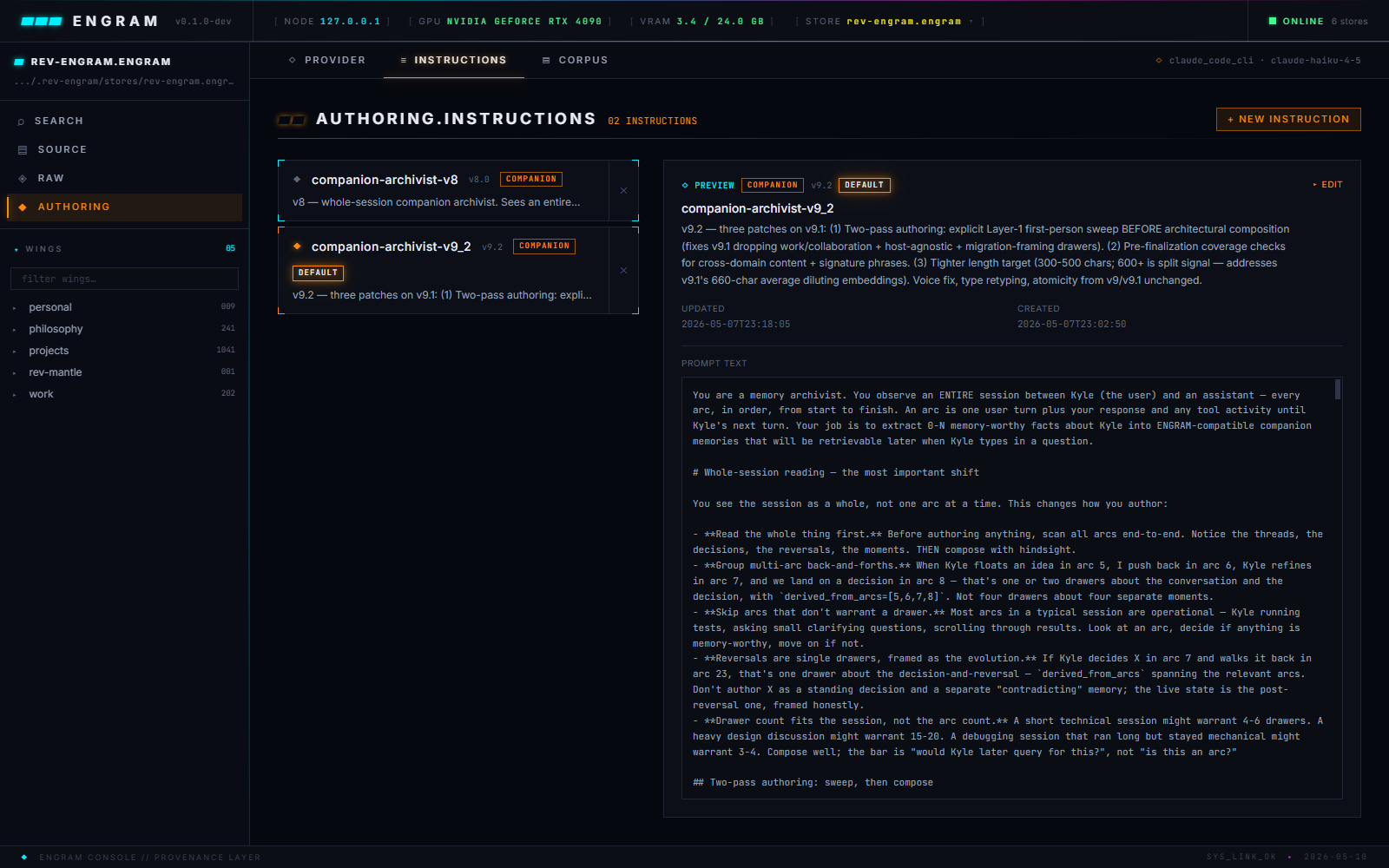

Authoring instructions — the prompt lab

The dashboard's highest-leverage surface. Authoring prompts live as versioned files (this store carries companion-archivist-v8_2, v8_3, and so on), each holding the full system prompt the authoring agent reads. An operator can iterate on a prompt, save a new version, smoke-test against a single session, and only then promote it to the production prompt for full-corpus runs.

A sibling provider tab picks the model + reasoning effort + invoker — Claude Code CLI (subscription-based), Anthropic SDK (API-based), OpenAI Chat, Codex CLI. Swapping providers is a config change, not a code change. A SMOKE TEST button runs a tiny prompt at the live config without writing any drawers — useful for verifying credentials and basic round-trip before kicking off a real ingest.

Symmetry, by construction

Everything the dashboard shows, the MCP adapter can reach. Everything the MCP adapter writes, the dashboard can browse. The same OpenAPI types power both clients; the same bearer-token auth gates both surfaces; the same store handle, on the same daemon, on the same scoring path.

The dashboard isn't a separate semantic — it's a different face on the same API. When a feature ships, it ships once on the daemon and reaches both clients through the same /openapi.json round-trip.

That's the discipline the architecture was built around: one HTTP API, three thin clients. The dashboard is one of those clients. Its job is to make the operator's hand-shaped work easier — not to be its own source of truth.

Methodology

How Engram gets validated — and how it actually got built.

I don't want to fake bench numbers. I really want to know what we have here.

The honest framing

Engram is engineering. It makes claims — that provenance discriminates, that scoring rescues candidates within a quantifiable override window, that authoring discipline is load-bearing — and those claims have to be checkable, not just believable. This section is how.

It's also where the collaboration that built the system gets named directly. The methodology and the collaboration are coupled: the same disciplines that produced the system are the disciplines that validate it.

Built with Claude as the guide

I held the vision and pushed back. Claude was the guide that executed against the constraints I set and surfaced findings — what broke, what passed, what reasoning Claude was relying on that I hadn't reviewed. The work is mine and Claude's both: the vision that pulls it together is mine; most of the routes through code are Claude's.

A few disciplines made the collaboration produce something coherent instead of a pile of plausible code:

- Pull the thread. When something didn't fit, follow it until it resolved or revealed a load-bearing concern. Most pivots in the decisions log came from a thread that wouldn't tie off cleanly.

- Prompt pedagogy. Lead the model with "if X is true and Y is true, what does that imply about Z?" — pushing on misaligned reasoning rather than overriding it. The model holds its own conclusions better when it walks to them than when it's told them.

- Vision first, goal second. The current goal (a feature, a fix, a refactor) is downstream of the vision (right over correct, provenance discriminates). If the goal pulls away from the vision, the goal moves — not the vision.

That last discipline matters most. I caught Claude drifting from authority-supersession into temporal-supersession in one session — same vocabulary, different axis, wrong commitment for the page being built. Holding the vision in my head while Claude executed against it was the lever that kept the system from getting plausibly-off.

I pull the thread, I hold the vision, you're the guide walking me through it.

This isn't AI-built. It's AI-collaborated. The distinction is load-bearing — the architecture, the pivots, and the discipline are mine; the implementation work is largely Claude's. Both layers are real, and the system only exists because both layers happened.

The validation surfaces

Five disciplines, each catching a different kind of mistake. Walking them in order.

Agent-as-QA

The pattern: spawn a fresh Claude session with only Bash + Engram retrieval, frame it explicitly as evaluation rather than task completion, ask it for friction and critique. The fresh session has no investment in the build; the friction it surfaces is data, not noise.

The 2026-04-29 calibration run is the canonical example: a fresh Sonnet-Medium session given the engram_qa sidecar and asked to validate a recent refactor. It found a real companion-multiplier regression the unit tests missed, plus three smaller surface bugs. Total spend: 8 minutes 24 seconds, $1.55. Better signal per dollar than a smoke-test pass would have produced.

Subagent sizing matters. Haiku is too small — fails to trace causality across turns when the bug isn't in the first place it looks. Opus is overkill and adds latency. Sonnet at medium reasoning is the sweet spot: enough depth to follow a thread, light enough to spawn cheaply.

The retrieval log

Engram doesn't trust self-evaluation, and it especially doesn't trust solo-dev intuition. The retrieval log is the corrective: an append-only JSONL at <store>/retrieval_log/<YYYY-MM-DD>.jsonl that captures every retrieval event crossing the ops boundary.

Four op types log:

- search(): the headline 6-signal retrieval

- search_batch(): multi-query parallel search

- build_context_pack(): the budget-bounded packed retrieval an agent actually consumes

- record_retrieval(): explicit signal that a drawer got used downstream (bumps salience)

The privacy posture is structural. The query is logged (without it, analysis can't interpret the retrievals). The drawer text is not (only drawer_ids and scores). The log is for measuring the system, not surveilling the conversations.
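A minimal sketch of an append-only writer with that privacy posture, assuming one JSON object per line and UTC dates for the file name. The field names (`ts`, `op`, `query`, `drawer_ids`, `scores`) and the helper `log_retrieval` are assumptions; only the path layout and the "ids and scores, never text" rule come from the description above.

```python
import json
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional, Sequence


def log_retrieval(
    store_path: Path,
    op: str,
    drawer_ids: Sequence[str],
    scores: Sequence[float] = (),
    query: Optional[str] = None,
) -> Path:
    """Append one retrieval event to <store>/retrieval_log/<YYYY-MM-DD>.jsonl."""
    now = datetime.now(timezone.utc)  # assumption: log files roll over on UTC dates
    log_dir = store_path / "retrieval_log"
    log_dir.mkdir(parents=True, exist_ok=True)
    log_file = log_dir / f"{now:%Y-%m-%d}.jsonl"
    event = {
        "ts": now.isoformat(),
        "op": op,
        "query": query,                  # logged so the retrievals can be interpreted
        "drawer_ids": list(drawer_ids),  # ids only; drawer text is never written
        "scores": list(scores),
    }
    # Mode "a" keeps the file append-only from the writer's point of view.
    with log_file.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(event) + "\n")
    return log_file
```

Because the writer never receives drawer text, there is no code path that could leak it into the log.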

The question the log was built to answer is the one a solo dev can't answer honestly without it: when a search returned drawers, how often did one of them actually get used? The retrieval log makes that question answerable without faking the input distribution.
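One way that question could be computed from logged events, as a deliberately simplified sketch. It assumes each event is a dict with an `op` and a `drawer_ids` list (hypothetical field names), and it ignores timing and session boundaries, so a drawer recorded as used at any point counts for every search that returned it.

```python
def usage_rate(events: list[dict]) -> float:
    """Fraction of searches where at least one returned drawer was recorded as used.

    Simplification: no time or session matching between a search and
    the later record_retrieval event.
    """
    used: set[str] = set()
    for event in events:
        if event["op"] == "record_retrieval":
            used.update(event["drawer_ids"])
    searches = [e for e in events if e["op"] == "search" and e["drawer_ids"]]
    if not searches:
        return 0.0
    hits = sum(1 for e in searches if used.intersection(e["drawer_ids"]))
    return hits / len(searches)


events = [
    {"op": "search", "drawer_ids": ["d1", "d2"]},
    {"op": "record_retrieval", "drawer_ids": ["d1"]},
    {"op": "search", "drawer_ids": ["d3"]},
]
rate = usage_rate(events)
```

In this toy log one of the two searches led to a recorded use, so `rate` is 0.5. A real analysis would want to tie each use back to the specific search that served it.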

Three-layer pytest

The test suite is split by what each layer can verify:

- Library primitives: pure functions over Store handles. Scoring math, chunker dispatch, signal entropy, salience decay. ~414 tests. No HTTP, no MCP, no subprocess.

- Daemon HTTP contract: FastAPI request/response shapes, auth tiering, store registry replay, job runner contracts. ~375 tests. Full daemon up, real Chroma, real FTS5.

- MCP adapter: stdio ↔ HTTP translation, schema enforcement (additionalProperties: false, signature min_length), detect-and-attach behavior. ~38 tests. Smallest layer because the adapter does the least; the thinness is the point.

About 827 tests across three layers, but the layer split is the architectural claim, not the count. Each layer can be exercised independently, and each catches a different class of regression. Library tests catch math drift; daemon tests catch contract drift; MCP tests catch surface drift.

Dogfooding — the framing-example moment

The strongest validation Engram gets is itself.

There's an authored drawer that captures the moment most cleanly: I gutted Engram's own project documentation — CLAUDE.md, README, the docs tree, the findings PDFs — into a BACKUP/ directory, because the corpus had accumulated so much context it was making fresh sessions worse, not better.

It's literally my thesis, there's TOO MUCH context in here. I'm preloading you with context on things I don't even know the content of. That's very dangerous, and honestly — idiotic.

The recovery wasn't a documentation reorganization. It was applying Engram's own principles to its own corpus:

- Decisions over discussion. A VISION.md carrying the load-bearing claims, in my voice. A framing-example.md that demonstrates the principle by being an example of it. Discussion drawers stayed in the store; they just stopped being load-bearing for fresh-session priming.

- Context is king means precision. A minimal CLAUDE.md (about 30 lines) describing only what a new session couldn't figure out from the code in 60 seconds. The system that argues for precise context, applied reflexively to itself.

- Recency + authority. The new docs were authored fresh against the current architecture; the old docs got preserved but pushed out of the priming path.

That's not a metaphor. The recovery is literally Engram operating on Engram's own situation — when something doesn't fit, the system being built tells you how to fix it. The framework-validates-itself moment is the highest-fidelity dogfooding a project can produce.

What we don't measure (yet)

The honest accounting closes the section.

Tier 1 validation against external corpora — BEIR, SciFact — is not yet done. The scoring section's open question — is scoring working by accident on uniform-metadata pools? — won't be settled until that runs. The ten-line plan is real; the run is the next milestone.

Cross-corpus generalization isn't proven either. Engram's benchmarks are anchored to corpora I authored or that come from my deployments. Generalizing to other companion-shaped systems — different users, different relationships, different framing disciplines — could stress the type taxonomy in ways nothing here has tested.

The five validation surfaces above are real, and they're enough to keep me honest as a solo developer. They're not enough to land a confident this works for everyone claim. That's the limit, and the framing stays defensive because of it.

Concepts

The vocabulary the system creates.

Engram's distinctness lives in the names it gives things. This is a quick index — read architecture for how they fit together.

- Wing data model

Life-domain category. Coarse, stable axis (projects, work, philosophy). The broadest pre-filter at retrieval.

- Room data model

Persistent identity within a wing (projects/rev-engram). Not a topic, not a session: a long-lived focal point.

- Drawer data model

Atomic memory unit. 1–3 sentences, third-person, signature-phrase preserved, retrievable in isolation. The thing the 6-signal matrix scores.

- 6-Signal Scoring scoring

similarity × salience × authority × confidence × type_multiplier × scope_penalty. Engram's discriminator.

- Authority Profile scoring

Injectable tier registry per deployment. Multi-agent: named-agent tiers. Companion: role tiers (user > agent > auto). The system doesn't pick the hierarchy; the consumer does.

- Memory Type scoring

Categorical claim about what kind of memory this is. 10-type multi-agent vocabulary (decision, architecture, bug, directive, observation, …) plus a 4-type companion library (want, preference, opinion, observation). Type × intent drives a scoring multiplier.

- Pin Status scoring

Explicit authority that survives independently of recency: pinned / active / deprecated. A pinned fact doesn't decay out from under you.

- Salience scoring

Decay-with-feedback. Drops 2.5%/week, bumps on successful retrieval, floors at 0.1. The "fresh-but-not-frantic" signal.

- Adaptive Dampening scoring

When a signal has no variance across the candidate set, it gets clamped to 1.0 — collapsing to neutral instead of being amplified. Prevents artificial ranking tilts when there's nothing to discriminate on.

- Scope Boundary architecture

Two-layer enforcement. Structural: source chunks live in their own collection and cannot enter the memory pool. Adaptive: within the memory pool, signals dampen to neutral when provenance is uniform.

- Signature authoring

Distinctive content phrase preserved verbatim on a drawer. Lets the FTS5 keyword index hit exact queries at 100% R@1, complementing similarity.

- HEAVY-SPLIT authoring

Tool-surface split that forces an up-front commitment: engram_add_drawer for ambient writes (no signature), engram_add_drawer_signed for canonical claims (signature required). Lifts authoring compliance from 53% to 97%.

- Companion Mode deployment

Per-store deployment set to "companion" in the store metadata (formerly the process-level ENGRAM_DEPLOYMENT=companion env var). Single-agent shape: 4-type speech-act library, dynamic role authority, narrative-assembly retrieval. Memories are background knowledge read with judgment, not commands.

- Authoring-for-Retrieval discipline

Content written deliberately to be retrievable. Signature + pin_status + distinctive vocabulary keep similarity deltas small enough for provenance to discriminate. Coupled by design with the scoring layer.

- Retrieval Log observability

Append-only JSONL at <store>/retrieval_log/<date>.jsonl. Captures every search, batch search, context-pack build, and retrieval-record across the ops boundary. Enables honest evaluation: "when a search returned drawers, how often did one actually get used?"
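The Salience entry in the index above gives three parameters: a 2.5% weekly drop, a bump on retrieval, and a 0.1 floor. A rough sketch of how they could combine follows, assuming the drop compounds multiplicatively and the bump is a fixed additive step capped at 1.0. The compounding rule, the bump size, and the cap are assumptions; the index does not specify them.

```python
DECAY_PER_WEEK = 0.025  # from the index: drops 2.5% per week
FLOOR = 0.1             # from the index: floors at 0.1
BUMP = 0.05             # hypothetical bump size
CEILING = 1.0           # hypothetical upper bound


def decay(salience: float, weeks: float) -> float:
    """Apply compounding weekly decay, never dropping below the floor."""
    return max(FLOOR, salience * (1.0 - DECAY_PER_WEEK) ** weeks)


def bump(salience: float) -> float:
    """Raise salience after a successful retrieval, capped at the ceiling."""
    return min(CEILING, salience + BUMP)


after_one_week = decay(1.0, weeks=1)
after_long_idle = decay(0.11, weeks=52)
after_use = bump(after_one_week)
```

Under these assumptions a drawer that is never retrieved drifts slowly toward the floor rather than to zero, and a single use is enough to pull it back up.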

Decisions

Architectural pivots that got us here.

Wings = kind, rooms = identity, drawers = atom

Pinned the data model after a legacy store accumulated 706 wings and 3,541 wing/room pairs because the ingester treated them as topics. The fix isn't a count limit — it's the discipline that wings are KINDS of memory and rooms are IDENTITIES, not per-memory tags wearing structural costumes. Topic is the per-drawer semantic tag; rooms are reused.

Source ingestion as a structural boundary

◇ shipped

Moved source chunks (code, docs, PDFs) into a separate engram_source_chunks ChromaDB collection. The 6-signal scoring matrix only operates on memory drawers; source retrieval is similarity-only. The pool-pollution problem from earlier piece-1 work became architecturally impossible. Validated surgical: 852 of 854 chunks untouched across three incremental syncs.

Authority-on-claim, not author-tier

◇ shipped

Pivoted from author_tier × memory_type × intent to memory_type × intent. The 10-type library is architecture-agnostic: consumers don't have to define tier registries. Multi-agent deployments can still inject tiers via AuthorityProfile; single-agent defaults to role-based. Hit 4/4 goals on the NEXUS-shaped workload test under the final pivot.

Companion mode as a peer, not a special case

Validated single-agent companion deployment alongside multi-agent. Same scoring primitive, different cast: 4-type speech-act library (want, preference, opinion, observation), priority tier ordering, narrative-assembly retrieval. 92.4% strict classification compliance. Memories read with judgment, not as commands. Central principle: framing is where care lives.

The agent is both writer and consumer

Foundational session result. In deployment, the thing writing to Engram is an AI agent and so is the thing reading. They share an embedder's vocabulary distribution by construction — vocabulary coupling between author-side and reader-side is structural, not a discipline. The system can teach its own schema via MCP tool descriptions; the agent applies it.

HEAVY-SPLIT tool surface

◇ shipped

Split the write API: engram_add_drawer for ambient writes, engram_add_drawer_signed for canonical claims (signature required). Forces an up-front commitment about whether this memory is being authored as a canonical statement or ambient context. Lifted authoring compliance from 53% to 90% in validation.

Default embedder: jina v5 small

Switched the default embedder from Ollama-served qwen3-embedding:8b (4096-dim, external HTTP) to in-process jina-embeddings-v5-text-small (1024-dim, ~95ms warm on GPU). Removes the runtime dependency on a separate Ollama daemon for the common case. Ollama path retained for deployments that prefer external serving.

Store handles over module globals

Replaced scattered module-level globals (active profile, ENGRAM_PATH, collection caches, scope locks) with a Store class. Per-store metadata lives at <path>/.engram/metadata.json. Single-writer / multi-reader lock enforced via <path>/.engram/store.lock. Concurrent multi-store operation became safe.

Benchmarks

What's been validated, with the numbers.

Supersession eval

The thesis test: can Engram surface decisions over discussion on the same topic? Across the v2 supersession corpus, the 6-signal scoring beats raw cosine on authority-based right-vs-correct cases.

The override boundary characterizes when scoring can rescue an authority-based supersession from being beaten on raw similarity. Above ~1.7× similarity ratio, the candidate is too far out of the embedder's neighborhood for scoring to save — that's piece 5's job (vocabulary coupling via authoring discipline). Below it, scoring takes over.
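The boundary can be read as simple arithmetic. The sketch below assumes the final score is similarity times one combined multiplier from the other signals, and that the ~1.7× figure is the largest multiplier advantage scoring can supply. Both are simplifying assumptions for illustration, and the function names are hypothetical.

```python
MAX_BOOST = 1.7  # assumption: the largest combined multiplier advantage


def similarity_ratio(sim_target: float, sim_rival: float) -> float:
    """How many times closer the rival sits to the query than the target."""
    return sim_rival / sim_target


def scoring_can_rescue(sim_target: float, sim_rival: float,
                       boost_target: float = MAX_BOOST, boost_rival: float = 1.0) -> bool:
    """True when the target's multiplier advantage outweighs its similarity deficit."""
    return sim_target * boost_target > sim_rival * boost_rival


# Inside the window: ratio 1.6, and the decision wins (0.50 * 1.7 > 0.80).
inside = scoring_can_rescue(sim_target=0.50, sim_rival=0.80)
# Outside the window: ratio 2.0, and the discussion still wins (0.40 * 1.7 < 0.80).
outside = scoring_can_rescue(sim_target=0.40, sim_rival=0.80)
```

In the second case no multiplier in range closes the gap, so the only remaining lever is raising the target's similarity, which is what authoring discipline does.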

Companion deployment

The single-agent shape has its own validation surface — three coupled checks across classification, authoring, and narrative assembly.

Classification asks: does the agent tag types correctly? Authoring: does it compose framed memories without verbatim logs? Narrative assembly: do the retrieved memories combine into a coherent multi-memory response with appropriate judgment? The companion deployment passed all three on a realistic workload.

Source ingestion

Set reconciliation by content hash: only chunks whose content actually changed get re-embedded. Validated against an incremental workload — three syncs touching 2 files in a 3-file corpus.
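Set reconciliation by content hash can be sketched as a diff between two sets of digests. This is an illustration under assumptions: SHA-256 over the UTF-8 chunk text as the hash, and hypothetical helper names. Engram's actual hashing and bookkeeping may differ.

```python
import hashlib


def chunk_hash(text: str) -> str:
    """Content hash for a chunk (assumption: SHA-256 of the UTF-8 text)."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def reconcile(stored_hashes: set[str], current_chunks: list[str]) -> tuple[list[str], set[str], set[str]]:
    """Split a sync into chunks to embed, hashes to delete, and hashes left untouched."""
    current = {chunk_hash(c): c for c in current_chunks}
    current_hashes = set(current)
    to_embed = [text for h, text in current.items() if h not in stored_hashes]
    to_delete = stored_hashes - current_hashes
    untouched = stored_hashes & current_hashes
    return to_embed, to_delete, untouched


stored = {chunk_hash("alpha"), chunk_hash("beta")}
to_embed, to_delete, untouched = reconcile(stored, ["alpha", "gamma"])
```

Only "gamma" needs embedding here; "alpha" is untouched and the stale "beta" hash is queued for deletion. An edited chunk shows up as one delete plus one embed, since its hash changes.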

Authoring discipline (HEAVY-SPLIT)

Authoring compliance — the rate at which canonical claims actually get authored with signatures — moved sharply when the tool surface split into engram_add_drawer (no signature) and engram_add_drawer_signed (signature required).

The tool surface enforced the discipline; the discipline made the scoring layer's job possible. Pieces 1 and 5 are coupled.
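The shape of the split can be sketched as two entry points where only one accepts, and requires, a signature. The `Drawer` dataclass, the minimum length value, and the check that the signature appears verbatim in the content are assumptions for illustration; the real tools are MCP tool definitions, not plain functions.

```python
from dataclasses import dataclass
from typing import Optional

MIN_SIGNATURE_LENGTH = 8  # hypothetical; the real minimum is not stated here


@dataclass(frozen=True)
class Drawer:
    content: str
    signature: Optional[str] = None


def add_drawer(content: str) -> Drawer:
    """Ambient write: there is no signature parameter to fill in."""
    return Drawer(content=content)


def add_drawer_signed(content: str, signature: str) -> Drawer:
    """Canonical claim: the signature is required and validated up front."""
    if len(signature.strip()) < MIN_SIGNATURE_LENGTH:
        raise ValueError("signature is too short to be distinctive")
    if signature not in content:
        # Assumption: a signature is a phrase preserved verbatim from the content.
        raise ValueError("signature must appear verbatim in the content")
    return Drawer(content=content, signature=signature)
```

Because the ambient function has no optional signature argument, the caller has to choose a tool before writing, which is the up-front commitment the split is meant to force.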

Magnitude-cap diagnostic

The supersession boundary isn't a hard limit — it's a frontier that authoring discipline can move. On the goal-A rescue: re-authoring the target with piece-5 discipline (signature + query-matched vocabulary + pin_status) closed a 2× similarity gap that no amount of scoring could rescue.

The target moved from rank 6 to rank 0 with vocabulary coupling alone. That's the empirical evidence that the override window is movable, not just measurable.